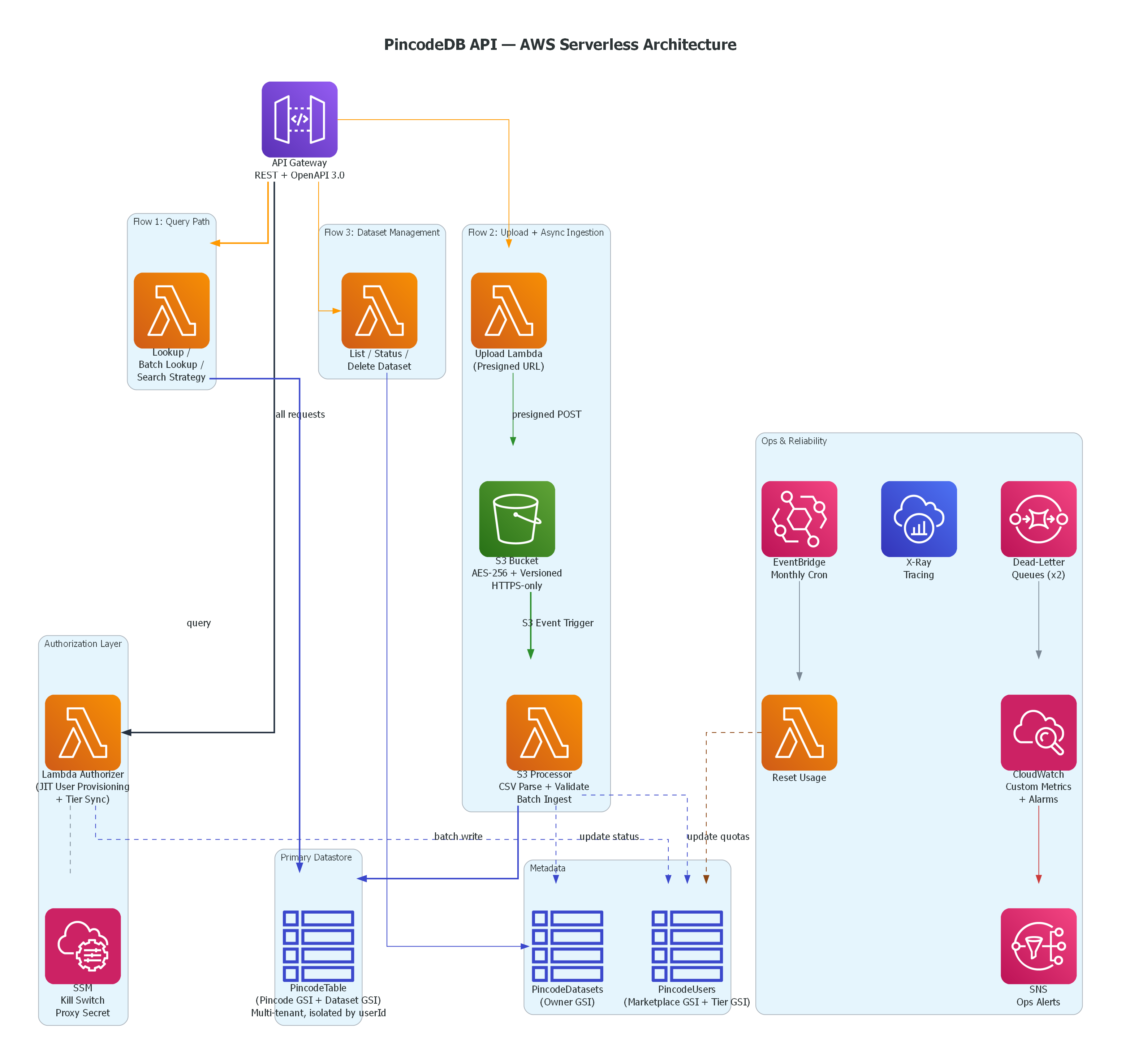

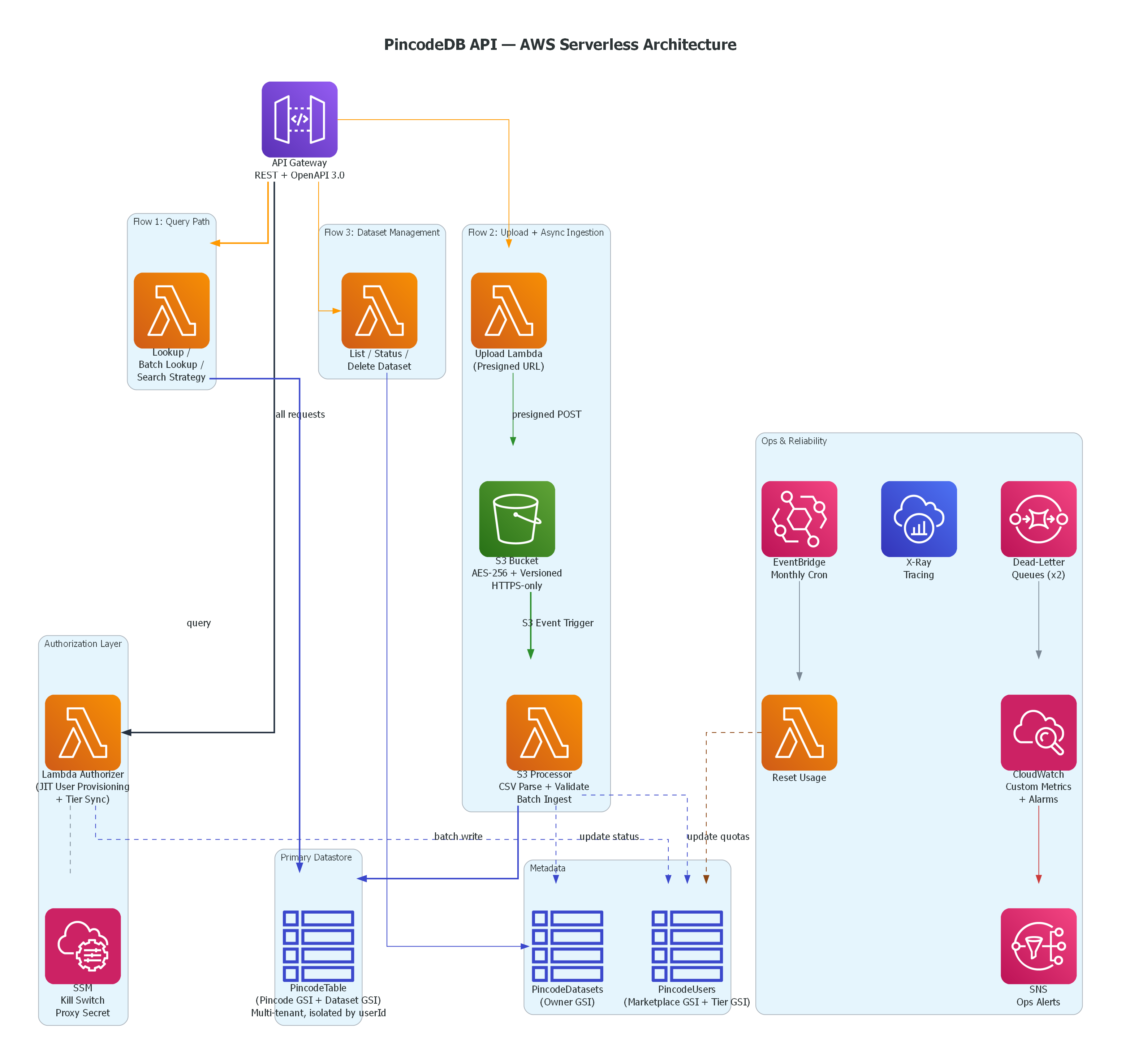

100% serverless on AWS. Entire infrastructure as code in a single SAM template.

A serverless, multi-tenant backend that turns any CSV of postal codes into a private, queryable REST API. Upload the CSV. Get a live API back in seconds. No server provisioning, no database setup, nobody else's data mixed with yours.

1. POST /postal-code/upload returns a presigned S3 POST URL and a datasetId.
2. Upload the CSV straight to S3 with that URL. The CSV never passes through API Gateway, so its 10 MB payload limit does not apply.
3. S3 emits an ObjectCreated event. The processor Lambda parses the CSV, validates the schema, and batch-writes to DynamoDB. Quota counters update atomically.
4. Poll GET /dataset/{id}/status until it returns SUCCEEDED. Then call GET /postal-code/search?pincode=110001 and your private data comes back.
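The batch-write part of the processor can be sketched as follows. DynamoDB's BatchWriteItem accepts at most 25 items per call, so parsed rows have to be grouped before they are written. The helper name `chunked` and the sample rows are illustrative and not taken from the project; with boto3, `Table.batch_writer()` handles this grouping for you.

```python
from itertools import islice
from typing import Iterable, Iterator

# BatchWriteItem accepts at most 25 put/delete requests per call.
DYNAMODB_BATCH_LIMIT = 25


def chunked(rows: Iterable[dict], size: int = DYNAMODB_BATCH_LIMIT) -> Iterator[list]:
    """Yield consecutive lists of at most `size` rows."""
    iterator = iter(rows)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


# Hypothetical parsed CSV rows.
rows = [{"pincode": str(110001 + i)} for i in range(60)]
batch_sizes = [len(batch) for batch in chunked(rows)]
print(batch_sizes)  # [25, 25, 10]
```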

Companies, especially in logistics, e-commerce, and fintech, maintain their own pincode master data in spreadsheets. These aren't raw post office records; they carry business-specific columns like delivery_sla, risk_score, is_serviceable, zone_type.

When a backend team needs this data as an API, the realistic options are: build and host your own lookup service, or use a public pincode API that knows nothing about your internal columns.

PincodeDB is option 3: upload the CSV, get a live queryable API in seconds. Private, isolated, yours.

All tenants share one DynamoDB table whose partition key is internalUserId. Three GSIs cover three access patterns. Cross-tenant isolation is enforced structurally by the query key, not by application-level filtering that could be bypassed. One table, all users, zero leakage.

The kill switch is a single SSM parameter, /pincode-api/enabled. Set it to false and the authorizer denies every request globally, with no deployment and no code change. The authorizer reads this on every request before any user-facing work happens.
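A rough sketch of what the deny path might look like: a Lambda authorizer answers API Gateway with an IAM policy document, so a kill switch only needs to return a Deny effect before any user lookup runs. The names `build_policy` and `authorize`, the "anonymous" principal, and the sample ARN are hypothetical; the project's own `_build_allow_policy` may be shaped differently.

```python
from typing import Optional


def build_policy(principal_id: str, method_arn: str, allow: bool,
                 context: Optional[dict] = None) -> dict:
    """Build a Lambda authorizer response for API Gateway."""
    response = {
        "principalId": principal_id,
        "policyDocument": {
            "Version": "2012-10-17",
            "Statement": [{
                "Action": "execute-api:Invoke",
                "Effect": "Allow" if allow else "Deny",
                "Resource": method_arn,
            }],
        },
    }
    # Context is only forwarded to downstream Lambdas on an allowed request.
    if allow and context:
        response["context"] = context
    return response


def authorize(api_enabled: bool, principal_id: str, method_arn: str) -> dict:
    # The kill switch is evaluated first, before any user-facing work.
    if not api_enabled:
        return build_policy("anonymous", method_arn, allow=False)
    return build_policy(principal_id, method_arn, allow=True,
                        context={"userId": principal_id})


# Hypothetical method ARN, for illustration only.
arn = "arn:aws:execute-api:ap-south-1:123456789012:abc123/prod/GET/postal-code/search"
denied = authorize(False, "user-1", arn)
allowed = authorize(True, "user-1", arn)
print(denied["policyDocument"]["Statement"][0]["Effect"])   # Deny
print(allowed["policyDocument"]["Statement"][0]["Effect"])  # Allow
```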

Columns beyond the core schema are stored in a customData map in DynamoDB and returned as-is. A logistics company can upload pincode,sla,risk,is_serviceable with no schema pre-registration.
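One way to picture the dynamic-schema handling is the sketch below, which splits each CSV row into a required key and a free-form map. It assumes `pincode` is the only mandatory column, which the project may not match; `REQUIRED_COLUMNS`, `row_to_item`, and `parse_csv` are hypothetical names.

```python
import csv
import io

# Assumption: pincode is the only column every dataset must carry.
REQUIRED_COLUMNS = {"pincode"}


def row_to_item(row: dict) -> dict:
    """Keep required columns at the top level; everything else goes into customData."""
    custom = {k: v for k, v in row.items() if k not in REQUIRED_COLUMNS}
    return {"pincode": row["pincode"], "customData": custom}


def parse_csv(text: str) -> list:
    """Validate the header, then convert every row into a storable item."""
    reader = csv.DictReader(io.StringIO(text))
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"missing required columns: {sorted(missing)}")
    return [row_to_item(row) for row in reader]


sample = "pincode,sla,risk,is_serviceable\n110001,2,low,true\n"
items = parse_csv(sample)
print(items[0])
# {'pincode': '110001', 'customData': {'sla': '2', 'risk': 'low', 'is_serviceable': 'true'}}
```

All values stay strings here, mirroring "returned as-is"; any type coercion would be a separate design decision.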

Custom metrics (LatencyMs, RequestsOk, RowsIngested, RequestedPins) are emitted via aws-embedded-metrics. X-Ray distributed tracing is enabled on all functions. An SQS DLQ depth alarm is wired to SNS for ops alerting.
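For readers unfamiliar with the CloudWatch Embedded Metric Format, the sketch below builds one such log line by hand using only the standard library. It is not how the project emits metrics (that goes through the aws-embedded-metrics library); the namespace "PincodeDB" and the "Function" dimension are assumptions made for the example.

```python
import json
import time


def emf_record(namespace: str, dimensions: dict, metrics: dict) -> str:
    """Build one EMF log line. `metrics` maps name -> (value, unit)."""
    record = {
        "_aws": {
            "Timestamp": int(time.time() * 1000),  # milliseconds since epoch
            "CloudWatchMetrics": [{
                "Namespace": namespace,
                "Dimensions": [list(dimensions)],  # one dimension set
                "Metrics": [
                    {"Name": name, "Unit": unit}
                    for name, (_, unit) in metrics.items()
                ],
            }],
        },
    }
    # Dimension values and metric values live at the top level of the record.
    record.update(dimensions)
    record.update({name: value for name, (value, _) in metrics.items()})
    return json.dumps(record)


line = emf_record(
    "PincodeDB",                # assumed namespace
    {"Function": "Lookup"},     # assumed dimension
    {"LatencyMs": (12.5, "Milliseconds"), "RequestsOk": (1, "Count")},
)
print(line)
```

Inside Lambda, printing a line of this shape to stdout is enough for CloudWatch Logs to extract the metrics.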

```python
def handler(event, context):
    # Every request: validate → lookup → provision/sync.
    # users_table and the _-prefixed helpers are defined elsewhere in the module.
    _validate_proxy_secret(event['authorizationToken'])
    marketplace_id = _extract_marketplace_id(event)
    current_tier = _get_tier_from_headers(event)

    user = users_table.get_item(
        Key={'marketplaceId': marketplace_id}
    ).get('Item')

    if not user:
        # First request: create user on the spot
        user = _provision_user(marketplace_id, current_tier)
    elif user['tier'] != current_tier:
        # Subscription changed: sync immediately
        user = _sync_tier(user, new_tier=current_tier)

    # Downstream Lambdas receive userId + tier in context
    return _build_allow_policy(
        principal=user['internalUserId'],
        context={
            'userId': user['internalUserId'],
            'tier': user['tier'],
        }
    )
```

```python
def is_api_enabled(ssm) -> bool:
    """
    One SSM parameter. Instant global shutoff.
    No deployment. No code change.
    """
    param = ssm.get_parameter(
        Name='/pincode-api/enabled'
    )
    return param['Parameter']['Value'] == 'true'
```

```python
from boto3.dynamodb.conditions import Key


def query_pincodes(table, user_id: str, pincode: str) -> list:
    """
    Single-table multi-tenancy.
    All tenants share one table; the partition key
    is internalUserId. Cross-tenant leakage is
    impossible at the DynamoDB query level.
    """
    return table.query(
        KeyConditionExpression=(
            Key('internalUserId').eq(user_id) &
            Key('pincode_officename').begins_with(pincode)
        )
    )['Items']
```

| Function | What it does |
|---|---|
| Authorizer | JIT user provisioning + tier sync on every request. Validates RapidAPI proxy secret. |
| Lookup | Single pincode search. Queries the user's own datasets with optional public fallback. |
| Batch Lookup | Up to 100 pincodes in one call. Same isolation guarantees as single lookup. |
| Search Strategy | Custom priority chain: specific dataset → all private → public. GROWTH+ only. |
| Upload | Generates presigned S3 POST URL with tier-based size limits enforced by policy. |
| S3 Processor | Parses CSV, validates schema, batch-writes to DynamoDB, updates quotas + status. |
| List Datasets | Returns all datasets owned by the authenticated user. |
| Get Status | Returns processing status for a specific dataset: PROCESSING / SUCCEEDED / FAILED. |
| Delete Dataset | Deletes records, metadata, and adjusts quota counters. BUSINESS+ only. |
| Reset Usage | Scheduled monthly via EventBridge. Resets paid tier usage counters. |

| Method | Path | Auth | Description |
|---|---|---|---|
| GET | /health | Public | Health check |
| GET | /postal-code/search | Required | Look up a single pincode across all datasets or a specific one, with optional public fallback |
| POST | /postal-code/batch-search | Required | Batch lookup of up to 100 pincodes in one call |
| POST | /postal-code/search-strategy | GROWTH+ | Chained search with custom priority order: specific dataset → all private → public |
| POST | /postal-code/upload | Required | Get a presigned S3 POST URL to upload a CSV dataset |
| GET | /datasets | Required | List all uploaded datasets for the authenticated user |
| GET | /dataset/{datasetId}/status | Required | Check processing status of a dataset |
| DELETE | /dataset/{datasetId} | BUSINESS+ | Delete a private dataset and all its records |
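The chained search behind /postal-code/search-strategy can be pictured as a loop over sources in priority order that stops at the first non-empty result. This is a sketch under that assumption: in-memory dicts stand in for the DynamoDB queries, and `chained_search` plus the source labels are hypothetical names.

```python
from typing import Callable, List, Optional, Tuple

Lookup = Callable[[str], list]


def chained_search(pincode: str,
                   sources: List[Tuple[str, Lookup]]) -> Tuple[Optional[str], list]:
    """Try each (label, lookup) pair in order; return the first non-empty hit."""
    for label, lookup in sources:
        items = lookup(pincode)
        if items:
            return label, items
    return None, []


# Stand-ins for the three tiers of data; real lookups would query DynamoDB.
specific_dataset = {"110001": [{"pincode": "110001", "sla": "1"}]}
all_private = {"560001": [{"pincode": "560001", "sla": "3"}]}
public = {"400001": [{"pincode": "400001"}]}

sources = [
    ("specific", lambda p: specific_dataset.get(p, [])),
    ("private", lambda p: all_private.get(p, [])),
    ("public", lambda p: public.get(p, [])),
]

print(chained_search("560001", sources))  # ('private', [{'pincode': '560001', 'sla': '3'}])
print(chained_search("999999", sources))  # (None, [])
```

Returning the label alongside the items lets a caller tell whether a hit came from private data or the public fallback.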

| Tier | Max File | Max Rows | Max Datasets | Dynamic Schema |
|---|---|---|---|---|
| FREE | 50 KB | 250 | 1 | No |
| BUSINESS | 10 MB | 1,000,000 | 50 | No |
| GROWTH | 20 MB | 5,000,000 | 200 | Yes |
| ENTERPRISE | 50 MB | 25,000,000 | 1,000 | Yes |
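The limits above translate naturally into a lookup table plus a pre-upload check. The sketch below assumes binary units (1 KB = 1024 bytes) and treats Max Rows as a per-upload limit, neither of which the table states; `TierLimits` and `upload_violations` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierLimits:
    max_file_bytes: int
    max_rows: int
    max_datasets: int
    dynamic_schema: bool


KB, MB = 1024, 1024 * 1024  # assumption: binary units

TIER_LIMITS = {
    "FREE": TierLimits(50 * KB, 250, 1, False),
    "BUSINESS": TierLimits(10 * MB, 1_000_000, 50, False),
    "GROWTH": TierLimits(20 * MB, 5_000_000, 200, True),
    "ENTERPRISE": TierLimits(50 * MB, 25_000_000, 1_000, True),
}


def upload_violations(tier: str, file_bytes: int, rows: int,
                      existing_datasets: int) -> list:
    """Return the list of limits an upload would break (empty means OK)."""
    limits = TIER_LIMITS[tier]
    problems = []
    if file_bytes > limits.max_file_bytes:
        problems.append("file too large")
    if rows > limits.max_rows:
        problems.append("too many rows")
    if existing_datasets >= limits.max_datasets:
        problems.append("dataset quota reached")
    return problems


print(upload_violations("FREE", 60 * KB, 100, 0))      # ['file too large']
print(upload_violations("GROWTH", 5 * MB, 10_000, 3))  # []
```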

- Every query is keyed on internalUserId. Ownership checks on delete and status operations. No way to reach another tenant's data.
- DeleteObject denied on raw uploads via IAM.
- Kill switch at /pincode-api/enabled. Set to false → instant global shutoff. No deployment, no code change.
- ruff: fast Python linter, runs on every push.
- black: enforced code style, fails CI on diff.
- pytest with moto: ~40 tests, no real AWS calls.
- sam validate: template validation against CloudFormation schema.

Tests use moto to mock AWS services (DynamoDB, S3, SSM) in-process. They cover happy paths, auth failures, quota enforcement, encoding edge cases, S3 duplicate event handling, and pagination.

The whole stack is defined in template.yaml. sam deploy --guided creates everything.

Production data stores are set to DeletionPolicy: Retain in the SAM template. Deleting the CloudFormation stack does not delete production data.